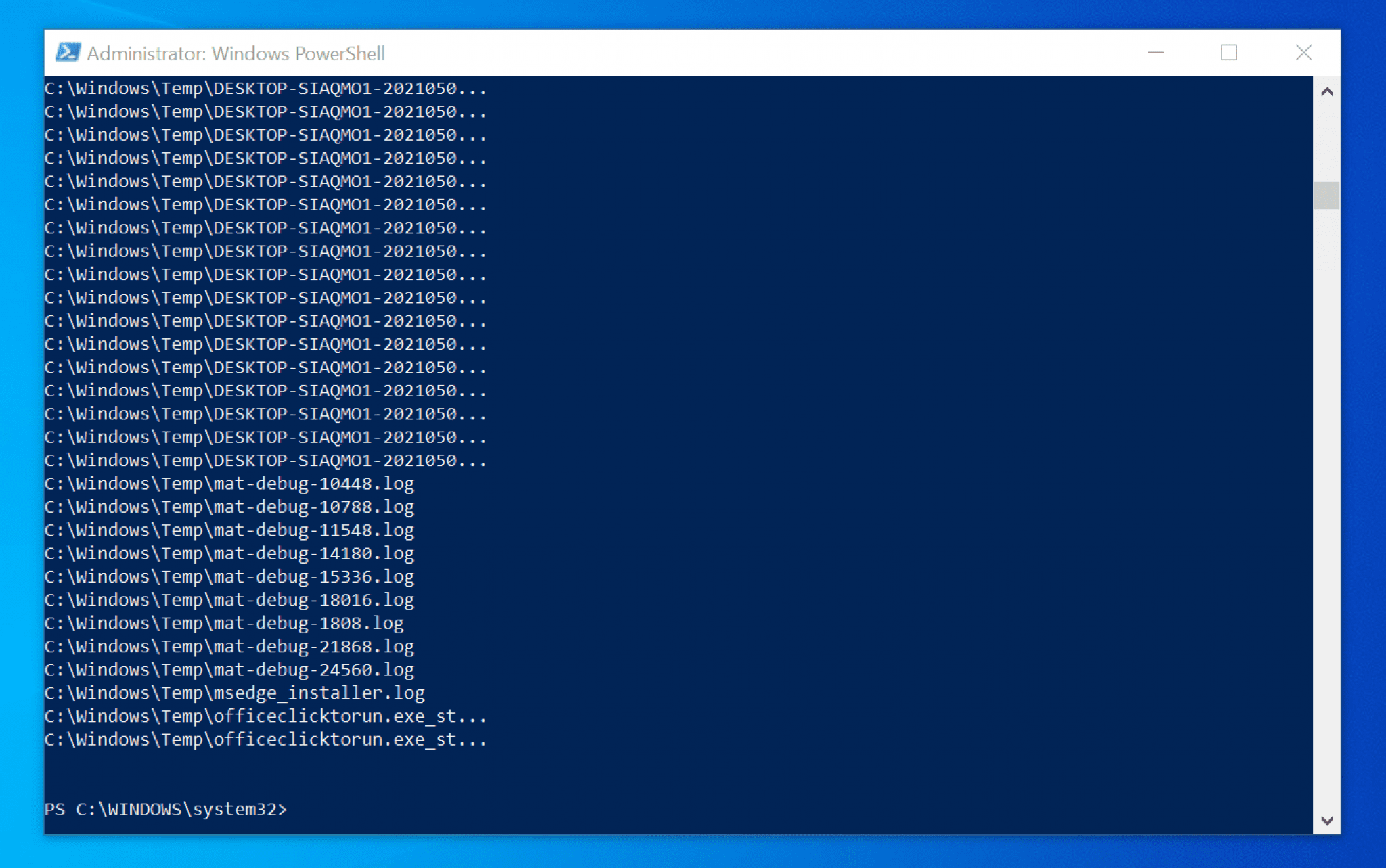

In the hash-based pipeline, the grouped objects are sent to ForEach-Object, which loops through each set of duplicate files. In each set, one file is skipped and left out of the list that is sent to Remove-Item, so one copy survives and the others are deleted.

For Windows, there are a large number of utilities for finding and removing duplicate files, but most of them are paid or poorly suited for automation.

The same grouping idea can be applied to names instead of hashes. In one example, all of the files and folders are generated by a system creating Video On Demand files. The software has changed, so everything new is now contained within folders, while older content sits in the root as mp4 files until it is retranscoded. The retranscoding process is what adds the v and a number to the end of the file or folder name. For folders, the script starts with `$InputFolderNameList = Get-ChildItem "E:\VODContent" -Recurse -Directory`, loops with `foreach ($InputFolderName in $InputFolderNameList)`, and stores each folder name together with a FirstPart value taken from `($InputFolderName -split '-')`. The names are then grouped with `$names | Group-Object -Property FirstPart` and filtered with Where-Object, after which the duplicates should have been removed. Removing the filtering combines both files and folders, which is additionally helpful.

For duplicate Outlook emails in a folder, type the command ending in `msg | Sort-Object -Property Subject | Select-Object -Unique` into the PowerShell window and press Enter. This turned up more old, unnecessary duplicate files. Then type the command Remove-Item -Force and press Enter. This command searches for and selects any duplicates of Outlook emails in the folder, and then deletes them.

A related forum question, "Powershell script to find duplicate entries in a .csv file", was posted by Reuben8973 on Sep 5th, 2020 at 2:19 AM: "Hi guys, I have been tasked to find duplicates in a CSV file, remove the duplicates from the 'master file' and write it to a new file. The User ID field needs to be a unique numeric number for each entry. Just a little more background on the purpose if you are curious…"

Another script asks you for a folder to scan and makes an inventory of the files with an MD5 checksum. In \All-Duplicate-Files.csv you will find all duplicate files.
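The inventory approach described above can be sketched roughly as follows. This is a minimal illustration, not the script the text refers to: the function name `Export-DuplicateFileReport` and its parameters are hypothetical, and it takes the folder as a parameter rather than prompting for it. It only reports duplicates and deletes nothing.

```powershell
# Hypothetical helper (name and parameters are illustrative, not from the original script).
# Scans a folder, hashes every file with MD5, and writes the files that share
# a hash with at least one other file to a CSV report.
function Export-DuplicateFileReport {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)]
        [string]$Folder,

        [Parameter(Mandatory)]
        [string]$ReportPath
    )

    # @() keeps the result an array even when nothing is found.
    $duplicates = @(
        Get-ChildItem -LiteralPath $Folder -File -Recurse |
            Get-FileHash -Algorithm MD5 |
            Group-Object -Property Hash |
            Where-Object { $_.Count -gt 1 } |      # keep only hashes seen more than once
            ForEach-Object { $_.Group } |          # expand each group back into its files
            Select-Object -Property Hash, Path
    )

    $duplicates | Export-Csv -LiteralPath $ReportPath -NoTypeInformation
    $duplicates
}
```

A call might look like `Export-DuplicateFileReport -Folder 'E:\VODContent' -ReportPath '.\All-Duplicate-Files.csv'`. MD5 is adequate for spotting accidental copies; for anything security-sensitive, SHA256 (the Get-FileHash default) is the safer choice.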
The complete pipeline, with the Where-Object and ForEach-Object script blocks written out to match the steps described, is `Get-ChildItem "C:\FilePath\*FileName.file" | Get-FileHash | Group-Object -Property Hash | Where-Object { $_.Count -gt 1 } | ForEach-Object { $_.Group | Select-Object -Skip 1 } | Remove-Item -Verbose`. The first step is to get a list of the files that may be duplicated; this is done with Get-ChildItem and the path and name of the files. This list is then sent to the Get-FileHash command, which retrieves the file hash for each file in the list. The file hashes are then grouped by hash value with Group-Object, so the duplicate file hashes sit next to each other in the list. The grouped list is then sent to Where-Object, which keeps only the groups whose count is greater than one. This identifies only the duplicated files from the list.

For one of the projects, we needed a PowerShell script that would find duplicate files in the network folders of the server.

A related question asks for the fastest way to search for duplicates. Running Import-Csv and selecting a few attributes, including DisplayName, all happens very quickly. However, grouping by DisplayName and looking for a count greater than 1 is slower in PowerShell than in Excel (which crashes intermittently).

For email messages, in the View Settings, you can click Sort. Then click on the header of the desired column, for example, Size, to compare messages and remove duplicates.

On macOS, click Find duplicates -> Find, select the locations, and click Scan.
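Because the pipeline above deletes files, a more cautious variant may be worth having. The following is a sketch under stated assumptions, not the article's own code: the function name `Remove-DuplicateFile` is hypothetical, and it wraps the same Get-ChildItem, Get-FileHash, Group-Object, Where-Object, ForEach-Object sequence so that `-WhatIf` can preview what would be removed.

```powershell
# Hypothetical helper (not from the article): the same hash-grouping steps,
# wrapped so that -WhatIf and -Confirm are available before anything is deleted.
function Remove-DuplicateFile {
    [CmdletBinding(SupportsShouldProcess)]
    param(
        [Parameter(Mandatory)]
        [string]$Path
    )

    Get-ChildItem -Path $Path -File |
        Get-FileHash |                                         # SHA256 by default
        Group-Object -Property Hash |                          # identical files share a hash
        Where-Object { $_.Count -gt 1 } |                      # only hashes with duplicates
        ForEach-Object { $_.Group | Select-Object -Skip 1 } |  # keep the first file of each set
        ForEach-Object {
            if ($PSCmdlet.ShouldProcess($_.Path, 'Remove duplicate file')) {
                Remove-Item -LiteralPath $_.Path
            }
        }
}
```

Running `Remove-DuplicateFile -Path 'C:\FilePath' -WhatIf` first lists the files that would be deleted without touching them; dropping `-WhatIf` performs the removal. Which copy counts as "the first" depends on the order Get-ChildItem returns the files, so this sketch makes no promise about which name is kept.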

For files that are exact copies of each other, the file hash is the same, so the operation is to find the file hashes for a group of files, keep one file from each set of matching hashes, and remove the rest. The complete operation to remove duplicate files is a single pipeline, and an explanation of each step goes with it. In a graphical duplicate finder on Windows, the equivalent is to click Tools -> Duplicate Finder -> Search.
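To see that exact copies really do share a hash, here is a small self-contained check. The file names and contents are made up for illustration; it works in a throwaway temporary folder and cleans up after itself.

```powershell
# Illustrative file names and contents; nothing here comes from the article.
$dir = Join-Path ([System.IO.Path]::GetTempPath()) ([guid]::NewGuid().ToString())
New-Item -ItemType Directory -Path $dir | Out-Null

Set-Content -Path (Join-Path $dir 'report.txt') -Value 'quarterly numbers'
Copy-Item -LiteralPath (Join-Path $dir 'report.txt') -Destination (Join-Path $dir 'report-copy.txt')
Set-Content -Path (Join-Path $dir 'notes.txt') -Value 'something else entirely'

$original = Get-FileHash -LiteralPath (Join-Path $dir 'report.txt')
$copy     = Get-FileHash -LiteralPath (Join-Path $dir 'report-copy.txt')
$other    = Get-FileHash -LiteralPath (Join-Path $dir 'notes.txt')

$original.Hash -eq $copy.Hash    # True: same bytes, same hash, even though the names differ
$original.Hash -eq $other.Hash   # False: different content

Remove-Item -LiteralPath $dir -Recurse -Force
```

The file name plays no part in the hash, which is why this approach finds duplicates that a name comparison would miss.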

So, you have a folder full of files, the names look similar, and you’re not sure what is in them. Storage space is tight, and you do not want to keep duplicate copies. How can you remove the duplicate files while avoiding the painstaking work of sorting through each one? With PowerShell and file hashes, the answer is surprisingly easy.
